This is the first installment in a 3-article series covering the genesis and maintenance of the National Board Exam for Optometry (the Exam). There are three individual parts of the National Board Exam (Part I – Applied Basic Science, Part II – Patient Assessment and Management and Part III – Clinical Skills Exam), but this article series will discuss the Exam as a whole. The following information should be helpful in providing a basic understanding of this essential tool for ARBO’s member boards.

The Exam plays an important role for our state and provincial board members. It is an essential tool that allows ARBO member boards to judge entry-to-practice competency for licensure. But more than a tool, the Exam is a living entity that must constantly change and update itself in order to adapt to the ever-shifting optometric environment.

We all know of the Exam. Boards rely on it, candidates sweat over it, and a cadre of councils, committees, dedicated optometrists and a leadership organization (NBEO) create, shape, organize and continually update it. The result is a current, comprehensive, relevant and fair exam that stands as a pillar of optometric public protection.

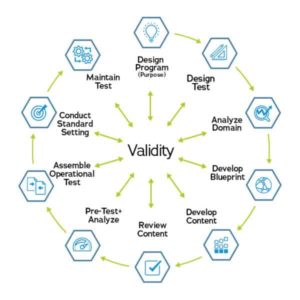

So what exactly goes into the planning required to produce this examination? The National Board of Examiners in Optometry (NBEO) uses a ‘validity-centered’ approach when developing the exam.

Validity is the degree to which theory and evidence support the intended uses and interpretations of test scores. Every step in the exam process is centered on validity.

Next, we will look at who is involved in developing the Exam and walk through the first few elements in Figure 1.

Who is involved in developing the Exam?

Figure 1: Test Development and Maintenance

- NBEO Staff – NBEO staff are responsible for the day-to-day operation and administration of the program.

- Subject Matter Expert (SME) Committees – Optometric professionals and educators of new professionals provide the content expertise needed for many of the test development steps.

- Independent Assessment Professionals – Psychometric professionals who train SMEs in test development processes and provide technical consultation and review.

Design the program and the test

Questions that guide these important initial steps include:

- What is the purpose of the test?

- What format should the test be?

- How will the test be administered?

The primary purpose of the Exam is to accurately identify candidates with the knowledge, skills and abilities needed for safe and effective entry-level optometry practice. Parts I and II use a multiple-choice format and are computer-based, administered at Pearson VUE testing centers. Part III is administered as a clinical exam on-site at the National Center of Clinical Testing in Optometry (NCCTO®) in Charlotte, North Carolina.

Analyze the domain and develop the blueprint

Questions to guide this process include:

- What knowledge/skills/abilities should the test address and at what cognitive complexity level?

- How many test items should be devoted to each content area?

The content of the exam items must match the knowledge, skills and abilities necessary for safe and effective entry-level practice (i.e., the "job-relatedness" of the exam) to ensure the validity of test score interpretations. SMEs – clinical optometric professionals and educators of new professionals – are heavily involved in this process.

Focus groups of SMEs convene to identify important content areas based on their professional experience. The results of the focus groups are converted into surveys, which are distributed to a large sample of optometric professionals for feedback. This feedback helps to identify the most important knowledge/skills/abilities necessary for safe and effective entry-level practice.

Survey information is used to create and update the Exam Content Matrix – a document shared with candidates and other constituents that specifies the content areas eligible for inclusion on the exam and the relative weight given to each area. Ongoing survey feedback also verifies that the Exam Content Matrix remains appropriate over time. The formal process of identifying the relevant exam content and determining its proportional representation on the exam is referred to as a job task analysis.

Article 2 of this series will discuss:

- Developing and reviewing content

- Pretest, analysis and assembling the operational test

Article 3 will cover:

- Standard setting

- Maintaining the test